This blog explains how FP&A can utilise Artificial Intelligence (AI) to make a data-driven forecast of...

Introduction

In the early 1990s, I became Finance Manager of a manufacturing operation facing challenges. The UK economy was in recession and the marketplace was changing. Sales forecasts were regularly missed by some 10%. There was too much working capital and hence a shortage of cash. This had to be brought under control, as the position of the business with its suppliers was at risk.

Traditionally, Finance had not contributed to the sales forecast but was responsible for the P&L between sales and profit.

The product, the remanufacture of alternators and starter motors, was highly seasonal: business volume was closely tied to the time of year.

I needed a tool that would help to optimise the business environment. This would shift the discussion to control of the future rather than analysis of what had gone wrong in the past. This article describes how I constructed that tool and how I would do this today using commonly available Machine Learning techniques.

I hope to show the analytical power that is now available to the FP&A practitioner and how it can drive business change in a structured manner; a method that involves considerably less effort than I had to apply all those years ago.

The Machine Learning Paradigm

Machine Learning is a branch of Artificial Intelligence that builds predictive functions by analysing data. It has three subgroups:

Unsupervised learning – ‘What happened?’. This includes techniques such as clustering that can find patterns in data that is not labelled, for example, the determination of market segments from historical data.

Supervised learning – ‘What is going to happen?’. Data is labelled and we place new data either onto a continuum, for which we use regression, or a discrete category, for which we use classification. In both cases we construct a model that is trained by historical data and used to determine the outcome for new data – for example, to determine the likely sales volume for a particular price, based on the historical relationship between price and volume.

Reinforcement learning – ‘How do we make it happen?’. This models the activity of an agent within an environment, rewarding positive behaviour so that the model evolves an effective solution. Self-driving cars are a sophisticated example.

I shall focus on unsupervised learning (clustering) and supervised learning (regression and classification).
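To make the supervised regression example concrete, here is a minimal sketch of the price-volume relationship using scikit-learn's `LinearRegression`. The prices and volumes are invented for illustration and deliberately lie on a straight line; real data would be noisier and might need more than one driver.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Hypothetical history: average selling price (feature) and units sold (label)
price = np.array([[40.0], [42.0], [45.0], [47.0], [50.0], [52.0]])
volume = np.array([2_600, 2_520, 2_400, 2_320, 2_200, 2_120])

# Train on the historical price-volume pairs
model = LinearRegression().fit(price, volume)

# Use the trained model to estimate the likely volume at a new price
predicted = model.predict([[48.0]])[0]
print(f"slope: {model.coef_[0]:.1f} units per 1.00 of price; volume at 48.00: {predicted:.0f}")
```

The fitted slope is the estimated change in volume per unit change in price; classification follows the same fit/predict pattern with a categorical label instead of a number.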

The Business Model

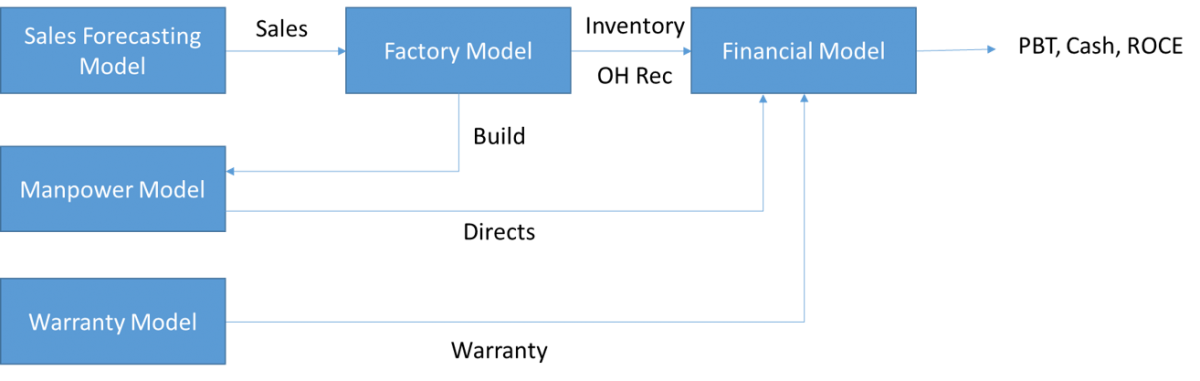

Figure 1: Business Model Schematic

The model created scenarios that forecast profit before tax (PBT), cash and return on capital employed (ROCE) for a range of inputs. It predicted cash in the short term and showed longer-term trends, helping to determine strategy.

I used a Quattro spreadsheet; at the time, this was the only spreadsheet that offered multiple linked tabs, so different modules could be connected and changes in drivers would ripple through the model.

The Sales Forecasting Model

Then:

I developed a time-series analysis model to calculate sales. It used a multiple regression with 13 explanatory variables – one for trend and 12 for the months of the year – to separate trend from seasonality. Forecast error was reduced from 10% to 2%. Figure 2 shows an actual report (from 1991) that displays how seasonal the business was and demonstrates the long-term downward trend.

Figure 2: Sales Forecasting Model Output – actual report from 1991

The module required knowledge of statistics, array functions and macro programming.
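The trend-plus-seasonality regression can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not a reconstruction of the original spreadsheet: the sales history is hypothetical and noise-free, period 0 is assumed to be January, and the 12 month indicators take the place of an intercept so that the 13 regressors are not collinear.

```python
import numpy as np

def design_matrix(n_periods):
    """13 regressors per month: a linear trend plus 12 calendar-month indicators.
    There is no separate intercept; each indicator acts as that month's level."""
    t = np.arange(n_periods)
    X = np.zeros((n_periods, 13))
    X[:, 0] = t                    # trend column
    X[t, 1 + t % 12] = 1.0         # month indicator (assumes period 0 = January)
    return X

def fit_trend_seasonality(sales):
    """Ordinary least squares fit; coef[0] is trend per month, coef[1:] the month levels."""
    coef, *_ = np.linalg.lstsq(design_matrix(len(sales)), sales, rcond=None)
    return coef

def forecast(coef, n_history, horizon):
    """Extend the trend and repeat the seasonal pattern for the next `horizon` months."""
    return design_matrix(n_history + horizon)[n_history:] @ coef

# Hypothetical, noise-free history: three years of seasonality on a falling trend
month_level = np.array([130, 125, 115, 100, 90, 85, 80, 85, 95, 110, 120, 135], dtype=float)
t = np.arange(36)
sales = month_level[t % 12] - 0.5 * t

coef = fit_trend_seasonality(sales)
next_year = forecast(coef, 36, 12)
print("trend per month:", round(coef[0], 3))
print("next 12 months:", np.round(next_year, 1))
```

Because the data here has no noise, the fit recovers the trend and month levels exactly; with real sales the residuals would give a measure of forecast error.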

Now:

I would use the Python programming language and a library such as Facebook’s Prophet. It is simple to apply, models seasonality out of the box and works at flexible time granularities. The statistical ‘heavy lifting’ is done automatically.

The Factory Model

Then:

The factory model used the sales forecasts to generate build inputs for the manpower model, and inventory and overhead recovery estimates for the financial model. It also calculated estimates of raw material purchases and expected service levels through regression, built using array functions in the spreadsheet.

Now:

I would use the Scikit-learn Python library to make regression a step-by-step process.
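A minimal sketch of what step-by-step regression looks like in scikit-learn, applied to the raw material purchases estimate. Everything in the data is hypothetical: the drivers (`build_units` and an `alternator_share` product-mix variable), the coefficients used to generate purchases and the noise level are invented for illustration.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_absolute_percentage_error
from sklearn.model_selection import train_test_split

# Step 1: assemble a hypothetical history, one row per month
rng = np.random.default_rng(0)
n = 60
build_units = rng.uniform(2_000, 6_000, n)
alternator_share = rng.uniform(0.4, 0.7, n)   # hypothetical mix driver
purchases = 50_000 + 12.5 * build_units + 40_000 * alternator_share + rng.normal(0, 2_000, n)
X = np.column_stack([build_units, alternator_share])

# Step 2: hold back a quarter of the months to test the model on unseen data
X_train, X_test, y_train, y_test = train_test_split(X, purchases, test_size=0.25, random_state=0)

# Step 3: fit the regression on the training months
model = LinearRegression().fit(X_train, y_train)

# Step 4: measure accuracy on the held-back months
mape = mean_absolute_percentage_error(y_test, model.predict(X_test))

# Step 5: estimate purchases for a planned build (units, alternator share)
plan = np.array([[4_500, 0.55], [5_200, 0.60], [3_800, 0.50]])
planned_purchases = model.predict(plan)
print(f"test MAPE: {mape:.1%}")
print("planned purchases:", np.round(planned_purchases, -2))
```

The same split, fit, evaluate and predict sequence would apply to the service-level estimate, with a different target column.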

It would also be worth applying the following techniques:

Classification. In those days much time was spent determining A, B and C inventory classes. ‘A’ items have the highest annual consumption value, ‘B’ items are intermediate and ‘C’ items have the lowest consumption value; some of these would be scrapped. I would use the existing categories to train a classification model to determine the inventory groupings. Its output would be checked for exceptions, reducing the time spent in management meetings.

Clustering, to identify slow-moving or obsolete inventory by manufacturer. The clusters would be plotted to see how the market was moving, and the results would feed into marketing and purchasing strategy.
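The ABC classification idea can be sketched with scikit-learn, under loudly stated assumptions: the item data is randomly generated, and the helper `pareto_abc` is a hypothetical stand-in for the existing manual categories, using the common 80%/15%/5% cumulative-value split. A shallow decision tree learns the grouping from the labelled items; on real data, items where the model and the existing label disagree become the exceptions to review.

```python
import numpy as np
import pandas as pd
from sklearn.tree import DecisionTreeClassifier

def pareto_abc(values, a_cut=0.80, b_cut=0.95):
    """Hypothetical stand-in for existing labels: rank by annual consumption value;
    items in the first 80% of cumulative value are A, the next 15% B, the rest C."""
    order = np.argsort(values)[::-1]                 # highest value first
    cum_share = np.cumsum(values[order]) / values.sum()
    labels = np.empty(len(values), dtype=object)
    labels[order] = np.where(cum_share <= a_cut, "A", np.where(cum_share <= b_cut, "B", "C"))
    return labels

# Hypothetical item master
rng = np.random.default_rng(1)
items = pd.DataFrame({
    "annual_units": rng.integers(10, 5_000, size=300),
    "unit_cost": rng.lognormal(mean=3.0, sigma=1.0, size=300),
})
items["annual_value"] = items["annual_units"] * items["unit_cost"]
items["existing_class"] = pareto_abc(items["annual_value"].to_numpy())

# Train a shallow, readable tree on the existing categories
features = ["annual_units", "unit_cost", "annual_value"]
clf = DecisionTreeClassifier(max_depth=3, random_state=0)
clf.fit(items[features], items["existing_class"])

# Exceptions: items whose existing class disagrees with the model, for review
items["model_class"] = clf.predict(items[features])
exceptions = items[items["model_class"] != items["existing_class"]]
print(items["model_class"].value_counts())
print(f"{len(exceptions)} exceptions to review")
```

Once trained, `clf.predict` can classify new part numbers directly, so only the exceptions need discussion.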
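The clustering step for slow-moving stock might look like the sketch below, using scikit-learn's `KMeans` on two hypothetical movement measures (`months_since_last_issue` and `stock_turns`). The three groups of stock lines, the manufacturer names and the choice of three clusters are assumptions made for illustration; in practice the features and number of clusters would be chosen from the data.

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(2)
makers = ["Maker X", "Maker Y", "Maker Z"]   # hypothetical manufacturers

def make_group(n, months_idle, turns, maker_probs):
    """Hypothetical stock lines with similar movement behaviour."""
    return pd.DataFrame({
        "manufacturer": rng.choice(makers, size=n, p=maker_probs),
        "months_since_last_issue": rng.normal(months_idle, 1.0, n).clip(0, None),
        "stock_turns": rng.normal(turns, 0.3, n).clip(0, None),
    })

stock = pd.concat([
    make_group(40, 1, 8.0, [0.6, 0.3, 0.1]),    # fast movers
    make_group(30, 6, 3.0, [0.3, 0.4, 0.3]),    # slowing
    make_group(20, 18, 0.5, [0.1, 0.2, 0.7]),   # possible obsolescence
], ignore_index=True)

# Scale the features so neither dominates the distance calculation, then cluster
X = StandardScaler().fit_transform(stock[["months_since_last_issue", "stock_turns"]])
stock["cluster"] = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(X)

# Treat the cluster with the longest average idle time as slow-moving or obsolete
slow = stock.groupby("cluster")["months_since_last_issue"].mean().idxmax()
stock["slow_moving"] = stock["cluster"] == slow

# Share of each manufacturer's lines falling in the slow-moving cluster
slow_share_by_maker = stock.groupby("manufacturer")["slow_moving"].mean()
print(slow_share_by_maker.round(2))
```

Repeating this on each period's stock data and plotting `slow_share_by_maker` over time would show which manufacturers' lines are drifting towards obsolescence.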

The Labour Model

The number of direct staff required to meet the projected build is based on known productivity levels and mix.

Then: spreadsheet.

Now: Python and Pandas.
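As a minimal Pandas sketch of the labour calculation: required hours are build units multiplied by standard hours per unit for each product (the mix effect), then converted into headcount using productive hours per person and an efficiency factor. All figures, including `HOURS_PER_FTE` and `PRODUCTIVITY`, are hypothetical.

```python
import math
import pandas as pd

# Hypothetical build plan (units per month) by product type
build = pd.DataFrame({
    "month": ["Jan", "Jan", "Feb", "Feb"],
    "product": ["alternator", "starter", "alternator", "starter"],
    "units": [1200, 800, 1500, 700],
})

# Hypothetical standard hours per unit: this is where product mix enters
std_hours = pd.Series({"alternator": 0.75, "starter": 0.5})
HOURS_PER_FTE = 150   # hypothetical productive hours per direct worker per month
PRODUCTIVITY = 0.85   # hypothetical achieved efficiency against standard

build["required_hours"] = build["units"] * build["product"].map(std_hours)
hours_by_month = build.groupby("month", sort=False)["required_hours"].sum()

# Round up: a fraction of a person still has to be covered
headcount = (hours_by_month / (HOURS_PER_FTE * PRODUCTIVITY)).apply(math.ceil)
print(headcount)
```

Changing the build plan or the mix updates the headcount directly, which is the same driver-based ripple effect the spreadsheet provided.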

The Warranty Model

Then:

This used lagged time-series analysis, built with spreadsheet array functions, to determine warranty rates.
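One way to express lagged warranty analysis, sketched here in Python rather than spreadsheet array functions: regress each month's warranty returns on sales one, two and three months earlier, so each coefficient estimates the share of a month's sales that comes back after that lag. The three-month window and the noise-free, randomly generated data are assumptions for illustration, not the original spreadsheet's formula.

```python
import numpy as np
import pandas as pd

def lagged_warranty_rates(sales, returns, max_lag=3):
    """Least-squares estimate of the fraction of each month's sales returned
    under warranty 1..max_lag months later (no intercept)."""
    lags = range(1, max_lag + 1)
    lag_cols = [f"sales_lag{lag}" for lag in lags]
    df = pd.DataFrame({"returns": returns})
    for lag, col in zip(lags, lag_cols):
        df[col] = pd.Series(sales).shift(lag)   # sales from `lag` months earlier
    df = df.dropna()                            # first months lack a full sales history
    rates, *_ = np.linalg.lstsq(df[lag_cols].to_numpy(), df["returns"].to_numpy(), rcond=None)
    return pd.Series(rates, index=[f"lag_{lag}" for lag in lags])

# Hypothetical, noise-free data: 2%, 1% and 0.5% of sales return after 1, 2 and 3 months
rng = np.random.default_rng(3)
sales = rng.uniform(800, 1_500, 48)
true_rates = [0.02, 0.01, 0.005]
returns = np.zeros(48)
for lag, rate in enumerate(true_rates, start=1):
    returns[lag:] += rate * sales[:-lag]

rates = lagged_warranty_rates(sales, returns)
print(rates.round(4))
print("cumulative warranty rate:", round(rates.sum(), 4))
```

The sum of the lag coefficients gives a cumulative warranty rate per unit sold, which can be applied to the sales forecast to project future warranty volumes.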

Now:

Today I would use a library such as Facebook’s Prophet for the time series, and Scikit-learn’s clustering to identify high-warranty-cost items by age, type and manufacturer. Plotted over time, these clusters would show how warranty patterns were moving, feeding into marketing and sourcing decisions. If this technology had been available to me then, the lengthy meetings to analyse warranty and identify problematic brands would have been considerably reduced.

The Financial Model

Then:

A complex spreadsheet on a PC.

Now:

Today I would develop the financial model in Python using data libraries such as Pandas, powerful visualisation libraries like Matplotlib and Plotly, and Dash to distribute personalised dashboards to managers. Then, I had to carry a PC around to board meetings – now, the cloud and dashboard distribution really would reduce the ‘heavy lifting’!
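A minimal sketch of a driver-based scenario model in Pandas, computing the PBT and return on capital employed outputs the business model was built to forecast. The scenario names, driver values and simplified definitions (PBT as operating profit less interest; ROCE as operating profit divided by capital employed) are assumptions for illustration; a full model would also carry the cash and working-capital modules.

```python
import pandas as pd

# Hypothetical driver sets, one row per scenario
scenarios = pd.DataFrame(
    {
        "volume": [100_000, 90_000, 110_000],
        "price": [60.0, 60.0, 58.0],
        "variable_cost": [38.0, 38.0, 37.0],
        "overheads": [1_500_000, 1_500_000, 1_550_000],
        "interest": [100_000, 100_000, 100_000],
        "capital_employed": [6_000_000, 6_000_000, 6_200_000],
    },
    index=["base", "downturn", "price-led growth"],
)

def run_model(d):
    """Roll the drivers through a simplified P&L for every scenario at once."""
    out = pd.DataFrame(index=d.index)
    out["sales"] = d["volume"] * d["price"]
    out["contribution"] = d["volume"] * (d["price"] - d["variable_cost"])
    out["operating_profit"] = out["contribution"] - d["overheads"]
    out["pbt"] = out["operating_profit"] - d["interest"]
    out["roce"] = out["operating_profit"] / d["capital_employed"]
    return out

results = run_model(scenarios)
print(results.round(3))
```

Because each scenario is a row, adding a scenario means adding a row of drivers; the resulting table is what a dashboard would then present to managers.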

Benefits

The scenario planning not only fed into short-term production, marketing and purchasing decisions but also helped define business strategy and promote business transformation.

Adopting an AI/ML approach

Then:

The model was built from first principles. It required statistical, spreadsheet and programming skills along with business knowledge. I developed it in tandem with my full-time Finance Manager role. It required time and dedication for its conception, development, testing and presentation.

Now:

Life would be easier. Here is a checklist of steps that I would employ:

Understand the key drivers.

Understand the issues – e.g. the business trends, inventory, warranty.

Identify what consumes Finance’s time.

Get trained in the techniques. There are many excellent distance learning courses in Machine Learning, and someone trained in finance would meet their prerequisites. Such a person would have a good grasp of ML techniques and Python within six months.

Leverage the power of the cloud for data collection and presentation.

Streamline the collection, analysis and presentation of data and make these available to managers to display the effects of different scenarios in models derived from the latest data.

The Skill Set Required

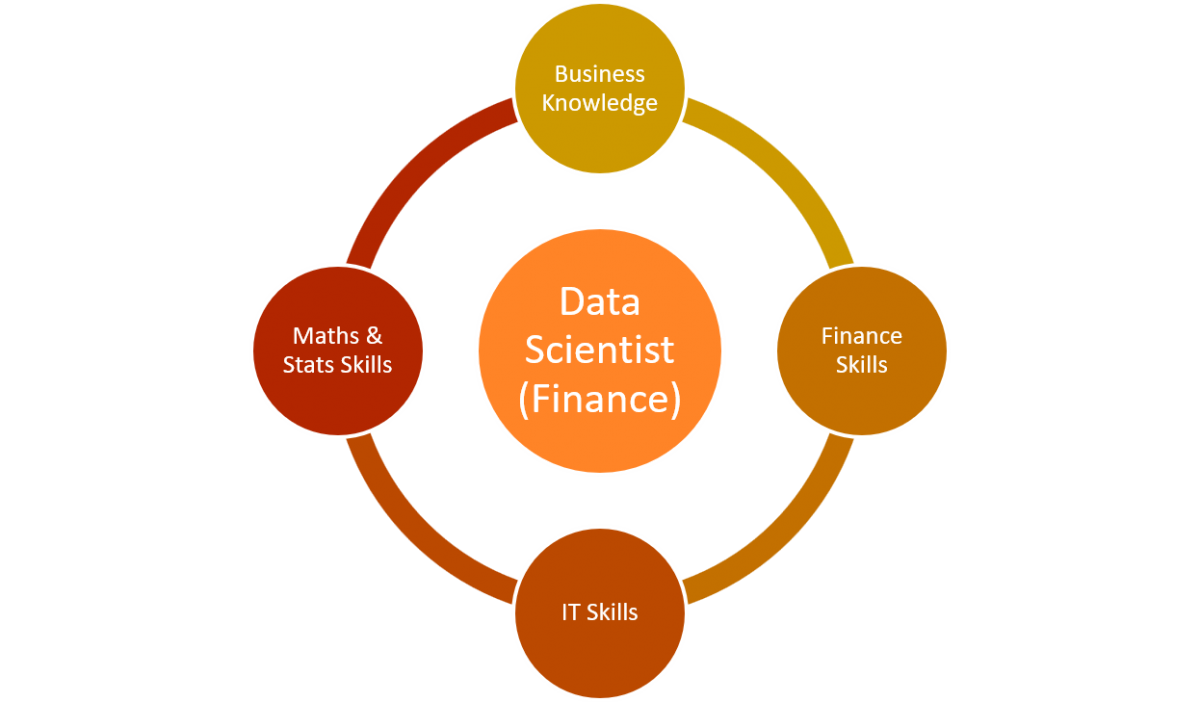

Recruit individuals who are curious and willing to learn. The processes I have described fit well with data lake technology – from the collection, organisation and analysis of data to its easily consumable distribution. Hence the ideal Finance Data Scientist would draw on business knowledge, maths and stats, finance and IT skills – this powerful combination makes a whole greater than the sum of the parts.

Figure 3: Skills for the ideal data scientist

Use consultants for mentoring, training and establishment of the role. The goal is to develop reliable and versatile individuals who could bring this powerful combination of skills and experience to bear on both short and long-term challenges.

Summary

I have described how I met a business challenge in the 1990s. The models gave the management team a common platform to understand trends and discuss scenarios.

Today, programming languages such as Python, their libraries and Machine Learning techniques would simplify and amplify this work.

The FP&A function can take advantage of these techniques now. Discussion among professionals will highlight the potential, and their use is bound to grow.
