In this article, finance professionals learn which AI tools matter most for FP&A, how to use...

This is the second of a three-part series on getting started with Artificial Intelligence (AI). The first part covered the basics of AI, the key players in the market and how the tools can be used in FP&A.

In Part 2, we now move on to how we use AI tools. We will explore prompts and how to structure them to maximise your output from LLMs.

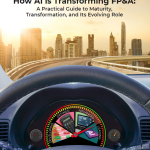

2.1. The R.C.T.F. Framework for Better Prompts

A prompt is the text, instructions, questions, or data that you give an LLM to produce an output.

In the early days of AI, there was a lot of talk about Prompt Engineering becoming the next great skill to learn and develop. When foundation models and LLMs were in their infancy, asking a question in a way that gave you the most valuable result was a specialised skill. As LLMs have evolved, they have become less rigid and better at interpreting what you are asking. However, one old rule still applies: the quality of AI output depends directly on the quality of your input.

When I first started my AI journey, the consensus was that the best way to optimise your output was to use the Role-Context-Task-Format framework to write prompts. Whilst this is becoming a little dated, I will describe this framework as it provides a very strong foundation.

Figure 1 shows the traditional R.C.T.F. prompt structure, which was widely used in early AI adoption to structure prompts and improve output quality.

Figure 1. The R.C.T.F. Prompt Framework
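To make the four R.C.T.F. elements concrete, here is a minimal Python sketch that assembles a prompt from them. The function name `build_rctf_prompt` and the sample wording are illustrative assumptions, not part of any AI vendor's tooling; the point is only that each element gets its own labelled line.

```python
def build_rctf_prompt(role: str, context: str, task: str, output_format: str) -> str:
    """Assemble a prompt with one labelled line per R.C.T.F. element."""
    parts = [
        ("Role", role),
        ("Context", context),
        ("Task", task),
        ("Format", output_format),
    ]
    return "\n".join(f"{label}: {text.strip()}" for label, text in parts)


# Hypothetical example values, for illustration only.
prompt = build_rctf_prompt(
    role="You are an FP&A analyst.",
    context="I am preparing monthly variance commentary for a board pack.",
    task="Explain the three largest variances against budget.",
    output_format="Three bullet points, one sentence each.",
)
print(prompt)
```

The resulting text can be pasted into any chat interface. Keeping the four elements as separate arguments makes it easy to reuse the same Role and Format across different tasks.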

The Evolution of Prompting

I mentioned above that this is getting a little dated now. We are seeing the existing tools evolve and new tools emerge. The 2026 generation of LLMs (GPT-5.3, Gemini 3.1, and Claude Opus/ Sonnet 4.6) are much better at "inferring" intent. If you stick strictly to R.C.T.F., you often end up with generic, "average" outputs because you are treating the AI like a template filler rather than a reasoning partner.

This has had a disruptive impact on the way we ‘prompt’. Instead of telling the AI what to do, you now tell it how to think through the problem. I am continually learning about the nuances of each AI platform. Improving output is a continuous learning journey. Using a one-size-fits-all framework like the R.C.T.F. ignores the relative strengths of each LLM.

For example, ChatGPT is the extrovert. It thrives on verbose, narrative prompts. It likes you to talk through the logic, and it rarely gets lost in long and detailed prompts. It also thrives on ‘multi-step reasoning’, where you ask it to critique its own first draft for over-optimism. This makes it great for creating Board narratives or strategic brainstorming.

The framework: C.O.R.E (Context, Objective, Reasoning, Evaluation)

How to use it: Provide a deep Context, state the Objective, and then explicitly ask the model to Reason (e.g., "Think through the impact of FX volatility on our EBITDA bridge step-by-step"). Finally, ask it to Evaluate its own answer for risks.

Why it works: GPT-5.3 is less likely to "hallucinate" if it is forced to write out its logic before giving the final number. The reasoning step in C.O.R.E is vital because it prevents the model from jumping to a conclusion before it has processed the context that you have provided.
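As a rough illustration of C.O.R.E., the sketch below renders the four sections in order so that the reasoning request always comes before the self-evaluation request. The `CorePrompt` class and the sample business details are hypothetical; only the section names and the FX example come from the description above.

```python
from dataclasses import dataclass


@dataclass
class CorePrompt:
    context: str
    objective: str
    reasoning: str
    evaluation: str

    def render(self) -> str:
        """Render the sections in C.O.R.E. order, separated by blank lines."""
        sections = [
            ("Context", self.context),
            ("Objective", self.objective),
            ("Reasoning", self.reasoning),
            ("Evaluation", self.evaluation),
        ]
        return "\n\n".join(f"{name}:\n{body.strip()}" for name, body in sections)


# Hypothetical example values, for illustration only.
core_prompt = CorePrompt(
    context="We report in GBP and a large share of revenue is billed in USD.",
    objective="Draft the FX section of the board narrative.",
    reasoning=(
        "Think through the impact of FX volatility on our EBITDA bridge "
        "step-by-step before giving any final number."
    ),
    evaluation="Review your answer for risks and flag any over-optimistic assumptions.",
).render()
print(core_prompt)
```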

On the other hand, Gemini is the "data navigator". It hates fluff. It prefers direct instructions and bullet points. It has a massive context window. It is my "go-to" for summarising entire folders of ERP exports or cross-referencing a budget sheet against a 50-page strategy document because it can "read" thousands of rows at once.

The framework: D.A.T.A (Data Source, Action, Technical Constraints, Answer Style)

How to use it: Direct it to specific Data (e.g., "Analyse the 'Revenue' tab in the attached Sheet"), give it a sharp Action ("Identify the top 3 variances"), set Technical constraints ("Exclude intercompany eliminations"), and define the Answer style.

Why it works: Gemini’s massive context window allows it to "read" thousands of rows instantly; it doesn't need a "Role" (like "Act as a CFO") as much as it needs clear data boundaries. The ‘Technical Constraints’ element is the most important part of this prompt: the model needs to know exactly what to ignore (e.g., intercompany eliminations) to stay accurate.
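A D.A.T.A. prompt is short and bullet-driven, so it lends itself to a small helper. The sketch below is one possible way to build it; `build_data_prompt` is a hypothetical name, the second constraint and the answer style are made-up examples, and the other values reuse the examples from the text.

```python
def build_data_prompt(
    data_source: str,
    action: str,
    constraints: list[str],
    answer_style: str,
) -> str:
    """Build a terse D.A.T.A. prompt with constraints as bullet points."""
    lines = [
        f"Data source: {data_source}",
        f"Action: {action}",
        "Technical constraints:",
    ]
    # One bullet per exclusion rule, so nothing is buried in a sentence.
    lines += [f"- {constraint}" for constraint in constraints]
    lines.append(f"Answer style: {answer_style}")
    return "\n".join(lines)


data_prompt = build_data_prompt(
    data_source="The 'Revenue' tab in the attached Sheet",
    action="Identify the top 3 variances",
    constraints=[
        "Exclude intercompany eliminations",
        "Use only rows for the current financial year",  # hypothetical extra rule
    ],
    answer_style="A table with one row per variance",  # hypothetical style
)
print(data_prompt)
```

Holding the constraints in a list makes the exclusions easy to review before the prompt is sent.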

Claude is the nerdy professor. I find that the best outputs are from structured prompts, using XML-style tags as signposts.

The framework: X-Structure (XML tagging)

How to use it: Wrap your prompt in tags like this:

<system_instruction> [Draft a variance narrative] </system_instruction>

<context> [I am preparing a board paper] </context>

<task> [Review each variance item, identify where, search transaction narratives for items that were not in forecast] </task>

<constraints> [Do not mention specific customer names; use 'Client A'] </constraints>

<bridge> [Check your output against the rules above; the answer should read as a complete, polished product] </bridge>

Why it works: Tagging prevents Claude from getting confused between your data and your instructions, which is vital for long-form FP&A reports. These tags also prevent ‘instruction drift’ during technical accounting reviews or long scenario analysis write-ups. For example, the tags will ensure that when you provide 50 pages of budget data, it doesn't accidentally treat your "Notes" as part of its "Instructions".
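Hand-typed tags are easy to mismatch, so a small helper can generate them. The following is a sketch only: the function names are hypothetical, and the rule that tag names must be lowercase with underscores is my own assumption for consistency, not a requirement documented here for Claude.

```python
import re

# Assumed convention: lowercase letters, digits and underscores, no spaces.
TAG_NAME = re.compile(r"^[a-z][a-z0-9_]*$")


def tag(name: str, body: str) -> str:
    """Wrap body in a matching pair of opening and closing tags."""
    if not TAG_NAME.match(name):
        raise ValueError(f"invalid tag name: {name!r}")
    return f"<{name}>\n{body.strip()}\n</{name}>"


def build_tagged_prompt(sections: dict[str, str]) -> str:
    """Join tagged sections in the order given, separated by blank lines."""
    return "\n\n".join(tag(name, body) for name, body in sections.items())


tagged_prompt = build_tagged_prompt(
    {
        "system_instruction": "Draft a variance narrative",
        "context": "I am preparing a board paper",
        "constraints": "Do not mention specific customer names; use 'Client A'",
        "bridge": "Check your output against the rules above",
    }
)
print(tagged_prompt)
```

Because every closing tag is generated from the same name as its opening tag, the pairs cannot drift apart in spelling or capitalisation.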

One of the biggest risks of AI in FP&A is hallucination and context drift. To mitigate this, we use “Chain of Thought” prompting techniques, which force the AI to document its logic and show its working before providing the final answer. For example, you could add the following to your prompt:

“After providing the calculation, review it for common financial logic errors, such as double-counting intercompany eliminations. Show me where you checked these.”
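If you use this check often, you can append it to every prompt automatically. The sketch below assumes a hypothetical helper called `with_self_check`; the `SHOW_WORKING` sentence is my own paraphrase of the idea above, while `SELF_CHECK` is the quoted instruction.

```python
# My own wording of the "show your working" request (an assumption).
SHOW_WORKING = "Show your working step by step before giving the final answer."

# The self-check instruction quoted in the article.
SELF_CHECK = (
    "After providing the calculation, review it for common financial logic "
    "errors, such as double-counting intercompany eliminations. "
    "Show me where you checked these."
)


def with_self_check(prompt: str) -> str:
    """Append the working and self-check instructions to an existing prompt."""
    return "\n\n".join([prompt.rstrip(), SHOW_WORKING, SELF_CHECK])


# Hypothetical base prompt, for illustration only.
final_prompt = with_self_check("Calculate consolidated EBITDA from the attached trial balance.")
print(final_prompt)
```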

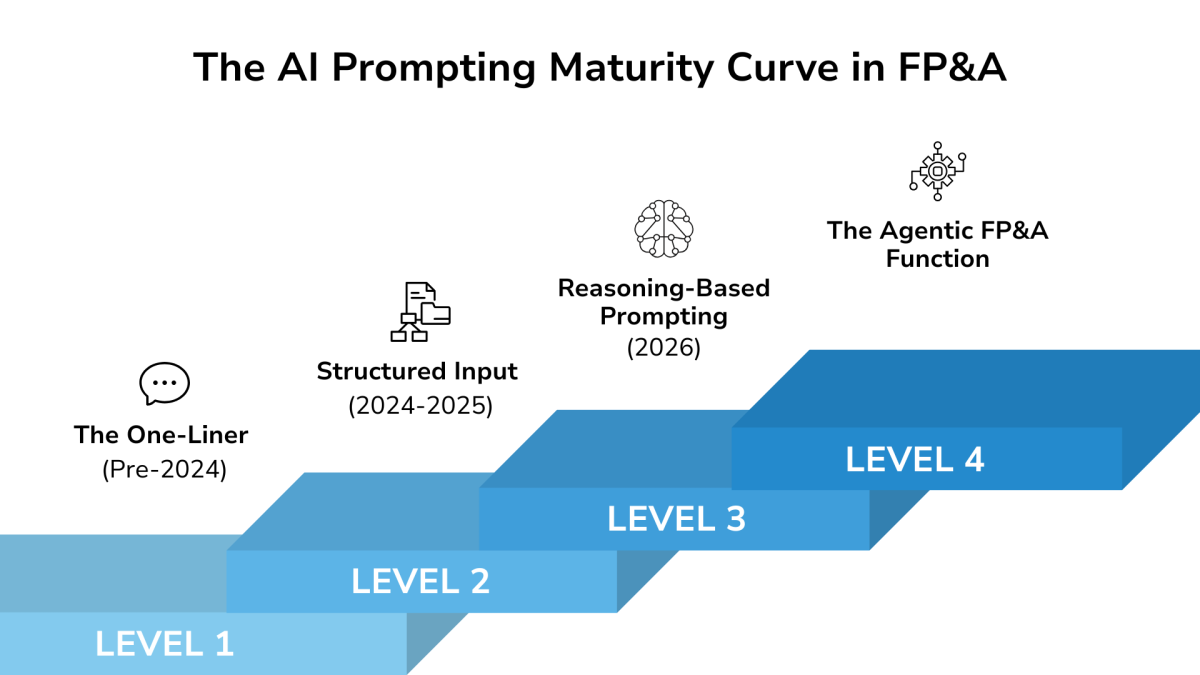

The 2026 Maturity Curve: From Instruction to Reasoning

Figure 2 illustrates how prompting practices in FP&A are evolving from simple instructions toward reasoning-based interaction with AI systems.

Figure 2. The AI Prompting Maturity Curve in FP&A

Level 1: The One-Liner (Pre-2024)

Simple, context-free commands (e.g., "Write a formula for churn") that often led to generic or hallucinated results.

Level 2: The R.C.T.F. Template (2024-2025)

Professionalising the output by providing a Role, Context, Task, and Format. This is currently where the top 10% of finance teams operate.

Level 3: Reasoning-Based Prompting (2026)

Moving beyond instructions to Logic-Based Frameworks. This involves using model-specific structures, such as C.O.R.E. or XML tagging, to ensure the AI reasons through dependencies, risk factors, and data nuances before it speaks.

As we look beyond the horizon of 2026, the "Agentic" era marks the final transition in our maturity curve. While the current shift from basic instructions to Reasoning-Based Prompting is transformative, it is merely the prerequisite for the next leap: Agentic AI.

Level 4: The Agentic FP&A Function

In this "Leading" state, AI moves from a tool you talk to, to a system that "does". Agentic capabilities allow AI to move beyond the chat interface and interact directly with your financial ecosystem.

Autonomous Execution: Instead of manually anonymising data and pasting it into a prompt, an AI agent will independently pull data from your ERP, verify it against your Excel models, and then flag anomalies before you even start your day.

The Orchestrator Model: Your role as a finance professional will evolve from a Data Processor to an AI Orchestrator. You will no longer spend 46% of your time on data collection (FP&A Trends Survey); instead, you will spend that time designing the "logic guardrails" and "strategic objectives" for your AI agents to follow.

Self-Auditing Systems: Future agents will employ their own internal "Reasoning Frameworks" (like the C.O.R.E. or X-Structure methods you've learned here) to double-check their own work. They will simulate board challenges and stress-test their own forecasts before presenting a final draft for your review.

By mastering these next-level frameworks, you move from simply "using AI" to "augmenting your judgment," ensuring your outputs aren't just faster, but significantly more accurate.

To conclude this section, here is a comparison table of the different models and the use cases they excel in:

| Model | Primary Framework | Cognitive Profile | Best FP&A Use Case |
| --- | --- | --- | --- |
| GPT-5.3 | C.O.R.E. | The "extrovert": Thrives on verbose narratives and deep context; rarely gets "lost" in long prompts. | Drafting board narratives, executive summaries, and "what-if" scenario storytelling. |
| Gemini 3.1 | D.A.T.A. | The "data navigator": Hates fluff, prefers direct instructions, and has a massive context window for large datasets. | Analysing 1,000+ row transaction files and cross-referencing multiple internal Google Docs/Sheets. |
| Claude 4.6 | X-Structure | The "nerdy professor": Extremely precise when using XML tags to separate data from instructions. | Technical accounting reviews, complex legal/strategic document synthesis, and highly structured report drafting. |
| DeepSeek | Logic-Chain | The "mathematician": Focuses on step-by-step mathematical reasoning and predictive logic. | Complex Power BI DAX coding, building sensitivity models, and auditing model logic. |
| Perplexity | Deep Research | The "researcher": Real-time web access with verifiable, clickable citations. | Industry benchmarking, competitor earnings call analysis, and macroeconomic data gathering. |

2.2. Your Action Plan: Start This Week

We have covered the basics of AI and the techniques for using them. The next big leap for you is to put this into practice. Here is your action plan:

Day 1: Set up your toolkit

Create an account on one of ChatGPT, Gemini, or Claude, as well as on Perplexity. Check with your IT department about any enterprise versions already available to you.

Day 2-3: Practice with low-risk tasks

Use last month's variance analysis (anonymised) to practice generating commentary. Have a play with different prompting styles and compare the output. Try Perplexity for a benchmarking question you've been meaning to research.
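Anonymising by hand is error-prone, so a short script can help with the customer-name part. This is a minimal sketch under stated assumptions: you supply the list of names yourself, the names shown are made up, and the 'Client A' aliasing simply follows the convention used earlier. It does not detect names on its own and is no substitute for your data-protection policy.

```python
import re
from string import ascii_uppercase


def anonymise(text: str, customer_names: list[str]) -> tuple[str, dict[str, str]]:
    """Replace each listed customer name with 'Client A', 'Client B', and so on."""
    if len(customer_names) > len(ascii_uppercase):
        raise ValueError("this sketch supports at most 26 customer names")
    # Aliases follow the order in which the names are supplied.
    mapping = {
        name: f"Client {ascii_uppercase[index]}"
        for index, name in enumerate(customer_names)
    }
    # Replace longer names first so a short name cannot split a longer one.
    for name in sorted(mapping, key=len, reverse=True):
        text = re.sub(re.escape(name), mapping[name], text, flags=re.IGNORECASE)
    return text, mapping


# Made-up commentary and customer names, for illustration only.
commentary = (
    "Revenue from Acme Ltd fell 12%, offset by Globex growth. "
    "Acme Ltd churn risk remains."
)
clean, mapping = anonymise(commentary, ["Acme Ltd", "Globex"])
print(clean)
```

Keep the returned `mapping` on your own machine so that you can translate the AI's commentary back to real customer names afterwards.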

Day 4-5: Apply to real work

Draft your next board pack executive summary with AI assistance. Generate Excel formulas for a model you're building. Create a first draft of forecast documentation.

Week 2 onwards: Build the habit

Identify one task each day where AI could accelerate your work. Track time saved. Share wins with your team.

2.3. Conclusion

FP&A has always been about translating numbers into decisions. AI doesn't change that; it accelerates it.

The FP&A professionals who thrive will be those who use AI to eliminate the 80% of time spent on data gathering and formatting. They will free themselves to focus on what actually matters: business partnership, strategic insight, and decision support.

You already have the commercial judgment. You already understand your business. Now you have the tools to communicate that understanding faster and more effectively than ever before.

Start small. Protect sensitive data absolutely. Always verify outputs.

The future of FP&A is the combination of humans, augmented by AI technology, outperforming everyone else.

The final part of this series will be a set of common prompts you can use to improve your FP&A tasks.
