In this article, the author explains how AI in FP&A can evolve from automation to a...

1. Introduction

Part 1 set out an Artificial Intelligence (AI) maturity framework for finance, and Part 2 described the human-AI collaboration model. This Part 3 paper describes a reference architecture for governed decision support using my Home Hedge Fund (HHF) project as an example implementation.

The process I follow is: generate options, test them against policy, and produce an evidence pack for accountable approval. This operating model is most relevant to organisations at Stages 4 to 6 of the maturity framework described in Part 1, where AI shapes material decisions under explicit governance.

For CFOs and FP&A leaders, the value is a repeatable, policy-gated and auditable aid to decision-making that does not surrender accountability.

This paper draws on my experience over many years as an audit manager, FP&A manager, and systems developer. The HHF derives from my quant training, but the principles apply equally to FP&A projects, where they help to minimise unclear decision rights, weak controls and thin evidence.

Executive framing:

Performance is a result; governance is the product.

2. Why This Matters to FP&A Leaders

My thesis is that AI can support material decisions without breaking decision rights, auditability, and control.

- Decision rights: option generation is separated from approval; nothing is released without gates and sign-off where required.

- Safety: stress or scenario gates, risk limits, and release-readiness gates enforce policy before anything is deployable.

- Auditability: the suite produces an evidence pack; every run is fingerprinted (data, parameters, code version).

- Improvement: post-run logging and Bayesian learning allow cumulative improvement from eligible runs.
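The fingerprinting idea in the auditability point can be sketched in a few lines. This is a minimal illustration, not the HHF implementation: the function name `run_fingerprint`, the field names and the sample values are all hypothetical, and SHA-256 over canonical JSON is simply one reasonable way to make a fingerprint deterministic.

```python
import hashlib
import json


def run_fingerprint(data_stamp: str, parameters: dict, code_version: str) -> str:
    """Return a deterministic SHA-256 fingerprint for one run (illustrative only)."""
    payload = {
        "data_stamp": data_stamp,      # assumed: an identifier for the data snapshot
        "parameters": parameters,      # assumed: the configuration under test
        "code_version": code_version,  # assumed: a release tag or commit identifier
    }
    # sort_keys and fixed separators produce identical bytes for identical inputs,
    # whatever order the parameters were supplied in.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical values, for illustration only.
fp_a = run_fingerprint("2024-01-31", {"lookback": 60, "risk_limit": 0.1}, "v1.4.2")
fp_b = run_fingerprint("2024-01-31", {"risk_limit": 0.1, "lookback": 60}, "v1.4.2")
fp_c = run_fingerprint("2024-01-31", {"lookback": 90, "risk_limit": 0.1}, "v1.4.2")
```

Here `fp_a` and `fp_b` are identical because only the key order differs, while `fp_c` differs because one parameter changed. That is the property an auditor needs: same inputs, same fingerprint.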

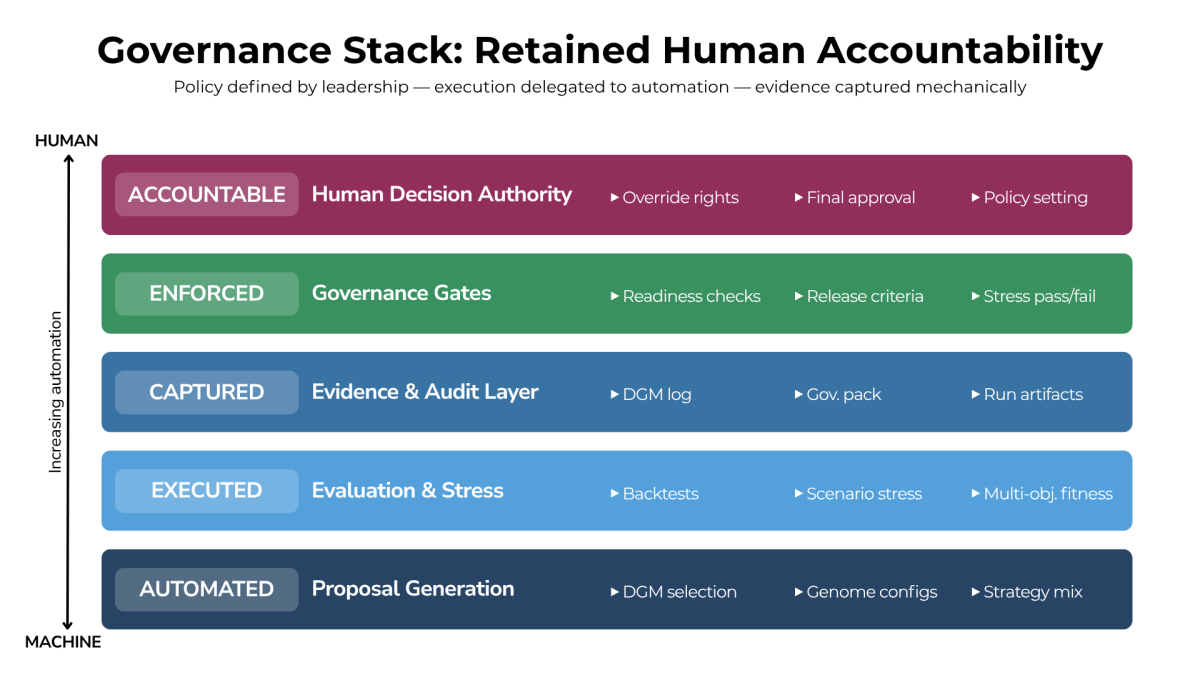

3. Governance Operating Model and Decision Rights

The suite is a governance stack: policy is set by leadership, execution is automated, evidence is captured mechanically, but accountability is retained by humans.

The operating principle is: propose, do not impose. AI may generate options and evidence, but human decision rights and accountability remain explicit.

The process makes a key distinction:

- Research champion: the best-performing configuration in a research context.

- Deployable candidate: a configuration that also passes hard gates (stress or scenario, evidence completeness, operational readiness, and release permissions).

Only deployable candidates matter: the system blocks release when gates are not met.
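The distinction between a research champion and a deployable candidate can be made concrete with a small sketch. The class, field and gate names below are hypothetical, and each gate is reduced to a single boolean; a real system would compute every gate from its own evidence.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    research_score: float      # performance in a research context
    stress_passed: bool        # stress or scenario gate
    evidence_complete: bool    # evidence completeness gate
    operationally_ready: bool  # operational readiness gate
    release_permitted: bool    # release permissions gate

    def failed_gates(self) -> list[str]:
        """List the hard gates this candidate does not pass."""
        gates = {
            "stress_or_scenario": self.stress_passed,
            "evidence_completeness": self.evidence_complete,
            "operational_readiness": self.operationally_ready,
            "release_permissions": self.release_permitted,
        }
        return [gate for gate, passed in gates.items() if not passed]

    @property
    def deployable(self) -> bool:
        return not self.failed_gates()


def research_champion(candidates: list[Candidate]) -> Candidate:
    """Best score, ignoring gates."""
    return max(candidates, key=lambda c: c.research_score)


def best_deployable(candidates: list[Candidate]) -> Optional[Candidate]:
    """Best score among candidates that pass every hard gate, else None."""
    eligible = [c for c in candidates if c.deployable]
    return max(eligible, key=lambda c: c.research_score) if eligible else None


# Hypothetical candidates: A scores higher but fails the stress gate.
cand_a = Candidate("A", 1.8, False, True, True, True)
cand_b = Candidate("B", 1.2, True, True, True, True)
candidates = [cand_a, cand_b]
```

In this example `research_champion(candidates)` returns A, but `best_deployable(candidates)` returns B. If no candidate passes every gate, the function returns `None`, so there is nothing to release.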

Figure 1: The Governance Stack and Retained Human Accountability

The following are vital for effective governance:

- Make materiality and risk appetite explicit before optimisation begins;

- Name and log override authority;

- Do not bypass the stress or scenario pass, artefact completeness or operational reliability;

- Make sure model upgrades are version-controlled, reversible and promoted only after observed stability;

- Treat reporting or alerting failures as control breaches and stop the process until they are resolved.

The reference architecture that operationalises these requirements is described in Section 4.

What a management report must prove:

Section 7 specifies the evidence pack; this dashboard summarises run readiness at a glance.

4. Suite Overview: Reference Architecture

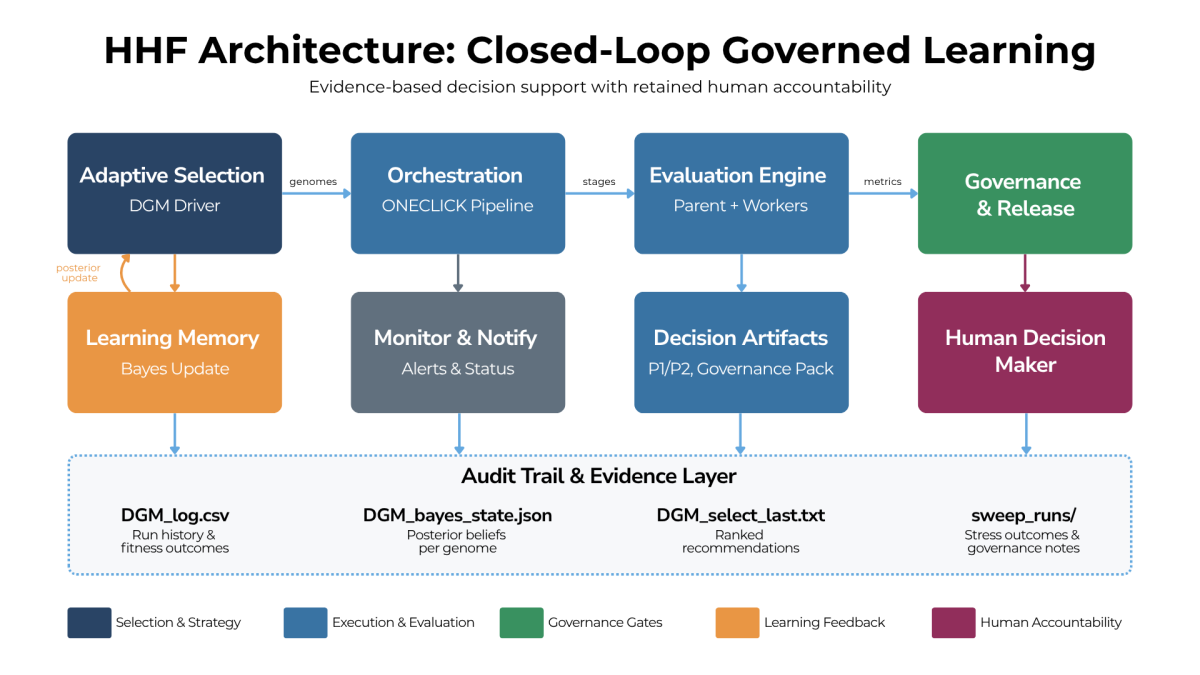

The HHF is a closed-loop architecture. The Darwin Gödel Machine (DGM) selects candidate configurations (called "genomes": parameter sets evolved through selection) to test. A controlled execution stack evaluates them and policy gates decide what can proceed. Post-run logging updates the learning state only from eligible runs that satisfy data and artefact integrity requirements.

The architecture is generator-agnostic: options may be produced by optimisation over parameter sets ("genomes") or by AI recommendation engines. Governance is enforced at the same two points: what may be learned and what may be actioned.

Figure 2 illustrates how these components interact within a closed-loop learning system.

Figure 2. Reference HHF Architecture and Closed Learning Loop

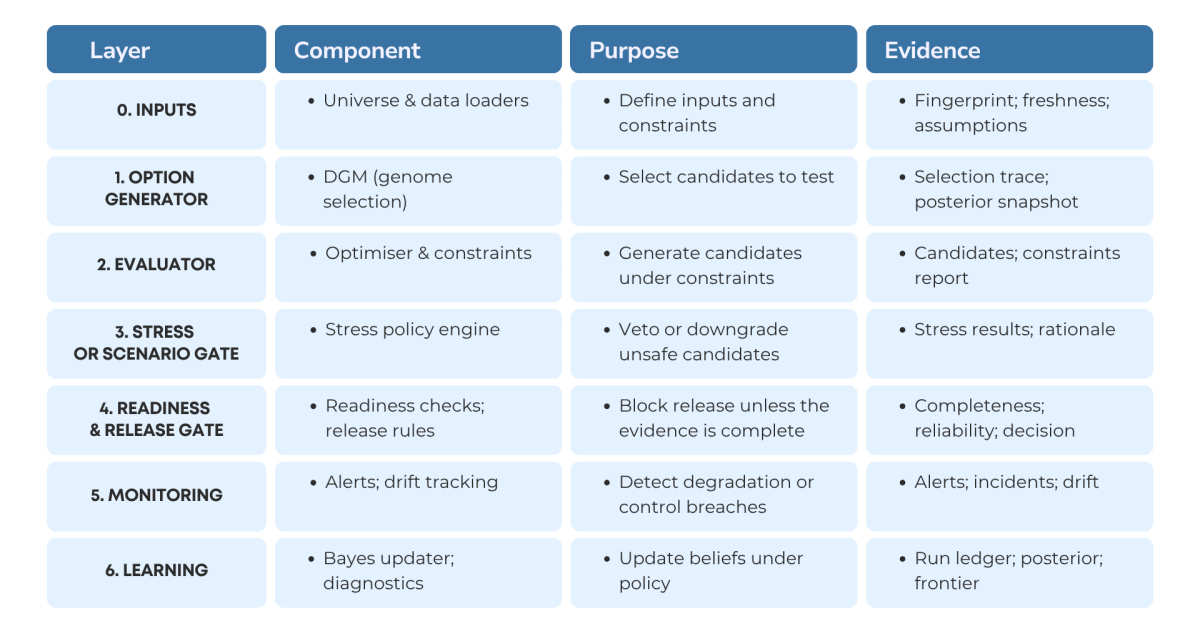

To make the architecture operational, each layer must have clearly defined responsibilities, controls, and evidence outputs. The table below summarises how governance is enforced across the stack.

Figure 3. Operating Layers, Components, and Evidence in the HHF Framework

The system keeps a record of what worked under the policy and updates which options it tests next.

5. End-to-End Operating Flow

While the table defines the static roles and controls of each layer, the following flow shows how they operate together in practice.

A standard run follows this sequence:

- The selection engine proposes candidate configurations based on strategy and posterior state.

- The execution stack runs controlled sweeps and produces candidate decisions.

- The stress or scenario policy determines the outcome (pass, fallback, or veto).

- Deployment readiness gates verify artefact completeness and operational status before any release path.

- The governance pack and monitoring outputs are generated.

- The learning updater logs the run metrics and then updates posterior parameters for eligible runs.

- Run-level diagnostics capture failure classes, control outcomes, and reproducibility fingerprints.

This design gives repeatability (standard flow), adaptability (learning), and control (hard gates plus audit artefacts).
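The last steps of the flow, where every run is logged but only eligible runs update the learning state, can be sketched as follows. The article does not specify the learning model, so this sketch assumes a simple Beta-Bernoulli posterior per configuration, counts only a stress pass as a success, and defines eligibility as complete artefacts plus fresh data. All names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class StressOutcome(Enum):
    PASS = "pass"
    FALLBACK = "fallback"
    VETO = "veto"


@dataclass
class Posterior:
    """Beta(alpha, beta) belief about a configuration's pass rate (an assumption)."""
    alpha: float = 1.0
    beta: float = 1.0

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)


@dataclass(frozen=True)
class RunResult:
    genome_id: str
    outcome: StressOutcome
    artefacts_complete: bool
    data_fresh: bool


def is_eligible(run: RunResult) -> bool:
    # Assumed eligibility rule: data and artefact integrity must both hold.
    return run.artefacts_complete and run.data_fresh


def update_posteriors(posteriors: dict, runs: list) -> list:
    """Log every run; update beliefs only from eligible runs."""
    ledger = []
    for run in runs:
        eligible = is_eligible(run)
        ledger.append(
            {"genome_id": run.genome_id, "outcome": run.outcome.value, "eligible": eligible}
        )
        if not eligible:
            continue  # logged for audit, but excluded from learning
        post = posteriors.setdefault(run.genome_id, Posterior())
        if run.outcome is StressOutcome.PASS:
            post.alpha += 1
        else:  # fallback and veto both count against the configuration here
            post.beta += 1
    return ledger


# Hypothetical runs: the third lacks artefacts, so it is logged but not learned from.
runs = [
    RunResult("g1", StressOutcome.PASS, True, True),
    RunResult("g1", StressOutcome.VETO, True, True),
    RunResult("g1", StressOutcome.PASS, False, True),
    RunResult("g2", StressOutcome.PASS, True, True),
]
posteriors = {}
ledger = update_posteriors(posteriors, runs)
```

The ledger holds all four runs, while the posterior for `g1` reflects only the two eligible ones. Keeping the audit trail complete and the learning input restricted is the design choice this sketch is meant to show.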

Figure 2 shows how this flow fits within the closed-loop architecture.

6. Model Risk, Operational Resilience, and Change Control

The failure taxonomy should separate data, policy, and pipeline classes because the remediation paths differ.

Operational controls such as preflight checks, lightweight iteration modes, and graceful stop rules promote efficiency while preserving evidence integrity.

For CFOs, a governed loop earns trust only if it fails safely, explains itself and can be reproduced.

- Separate policy failures from engineering failures: distinguish failing gates (policy failures) from exceptions, missing data, or engineering incidents.

- Stop-the-line criteria: if artefacts are missing, alerts fail, or data freshness is breached, the run cannot be treated as decision evidence.

- Fingerprint every run: log code version, configuration, and data stamps so results can be reproduced and audited.

- Governed change control: give parameters and scripts a version, document intended effects, and retain rollback capability.

- Monitoring and reporting are controls: if they fail, that is a control failure, not a cosmetic issue.
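The failure taxonomy and the stop-the-line criteria above can be combined in one small classifier. This is a sketch under stated assumptions: the function name, its parameters and the order of the checks are hypothetical, and it treats a failed gate as a valid governed outcome (the evidence is intact and the candidate is simply blocked), which is one reading of the policy-versus-engineering distinction.

```python
from enum import Enum
from typing import Optional


class FailureClass(Enum):
    DATA = "data"
    POLICY = "policy"
    PIPELINE = "pipeline"


def assess_run(
    gates_passed: bool,
    artefacts_present: bool,
    alerts_delivered: bool,
    data_age_hours: float,
    max_data_age_hours: float,
    raised_exception: bool = False,
) -> tuple[Optional[FailureClass], bool]:
    """Return (failure class or None, whether the run may serve as decision evidence)."""
    # Stop-the-line: stale data means the run cannot be decision evidence.
    if data_age_hours > max_data_age_hours:
        return FailureClass.DATA, False
    # Stop-the-line: missing artefacts, failed alerts or exceptions are engineering failures.
    if raised_exception or not artefacts_present or not alerts_delivered:
        return FailureClass.PIPELINE, False
    # A failed gate is a policy failure: the evidence stands, the candidate is blocked.
    if not gates_passed:
        return FailureClass.POLICY, True
    return None, True


# Hypothetical examples with a 24-hour data freshness limit.
clean_run = assess_run(True, True, True, 6, 24)
gate_failure = assess_run(False, True, True, 6, 24)
stale_data = assess_run(True, True, True, 30, 24)
alert_failure = assess_run(True, True, False, 6, 24)
```

Returning the failure class separately from the evidence flag keeps the remediation path (fix the data, fix the pipeline, or revisit the candidate) distinct from the question of whether the run can be cited in an approval.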

These controls are documented and attested in the CFO evidence pack described in Section 7.

7. The CFO Evidence Pack (How Recommendations Are Approved)

Every recommendation should ship with a standard evidence pack that an executive can sign. This turns model output into decision support that is governed.

- Executive summary: recommendation, expected upside, downside, and decision horizon.

- Policy compliance statement: which gates were applied and the pass or fail rationale.

- Stress or scenario outcomes: performance under adverse conditions and key sensitivities.

- Assumptions and inputs: data sources, freshness, cost assumptions, and constraints.

- Lineage and reproducibility: code version, parameter set, run fingerprint, and rerun protocol.

- Alternatives considered: short-listed options, not just a single winner.

- Decision log: who approved, who overrode, and why.
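A completeness check over these seven sections is easy to automate before a pack reaches an executive. The sketch below assumes the pack is held as a dictionary keyed by section; the key names and sample content are hypothetical.

```python
# Section keys mirror the evidence pack list above; the exact names are assumptions.
REQUIRED_SECTIONS = (
    "executive_summary",
    "policy_compliance",
    "stress_outcomes",
    "assumptions_and_inputs",
    "lineage",
    "alternatives_considered",
    "decision_log",
)


def missing_sections(pack: dict) -> list[str]:
    """Return required sections that are absent or empty, in pack order."""
    return [section for section in REQUIRED_SECTIONS if not pack.get(section)]


def ready_for_sign_off(pack: dict) -> bool:
    return not missing_sections(pack)


# Hypothetical draft: alternatives are absent and the decision log is still empty.
draft_pack = {
    "executive_summary": "Recommend option B over a 12-month horizon.",
    "policy_compliance": "All gates applied; stress gate passed.",
    "stress_outcomes": {"adverse_case": "within limit"},
    "assumptions_and_inputs": {"data_freshness": "month-end close"},
    "lineage": {"code_version": "v1.4.2"},
    "decision_log": [],
}
gaps = missing_sections(draft_pack)
```

For the draft above, `gaps` lists `alternatives_considered` and `decision_log`, so `ready_for_sign_off(draft_pack)` is `False` until both are supplied.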

Machine-readable artefacts:

- Run ledger: History of evaluated runs and outcomes.

- Posterior state: Current beliefs per configuration.

- Selection summary: Ranked recommendation and rationale.

- Trade-off frontiers: Explicit risk versus return trade-offs.

- Per-run folders: Stress or scenario outcomes, governance notes, and full decision lineage.

8. Where This Fits in the FP&A Calendar

To make this useful to FP&A, embed it in existing forums rather than creating a parallel "AI process". Typical fit points:

- Rolling forecast: generate and stress test scenario ranges, not single-point forecasts.

- Budget season: enforce risk appetite and constraint discipline across competing plans.

- Capex prioritisation: generate option sets under capital constraints, stress test downside cases and document trade-offs.

- Pricing and margin: evaluate alternative actions under demand uncertainty with controlled sensitivities.

- Working capital: propose actions and evaluate trade-offs against liquidity stress scenarios.

- Risk refresh: periodically recalibrate stress or scenario policies and document the rationale for governance.

Policy thresholds should be version-controlled and reviewed through change control; examples include stress thresholds, decision hurdles, and data-quality budgets.
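One way to picture version-controlled thresholds is as an immutable record that can only be changed by creating a new version with a documented rationale. The sketch below is illustrative: the field names, values and the `amend` helper are hypothetical, and a real organisation would more likely keep thresholds in a reviewed configuration repository.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PolicyThresholds:
    version: int
    max_stress_drawdown: float   # assumed example of a stress threshold
    min_decision_hurdle: float   # assumed example of a decision hurdle
    max_missing_data_pct: float  # assumed example of a data-quality budget


def amend(current: PolicyThresholds, rationale: str, approved_by: str, **changes):
    """Return (new thresholds, change record); the current version is left untouched."""
    if not rationale.strip():
        raise ValueError("a threshold change needs a documented rationale")
    updated = replace(current, version=current.version + 1, **changes)
    record = {
        "from_version": current.version,
        "to_version": updated.version,
        "changes": changes,
        "rationale": rationale,
        "approved_by": approved_by,
    }
    return updated, record


# Hypothetical values, for illustration only.
v1 = PolicyThresholds(version=1, max_stress_drawdown=0.20,
                      min_decision_hurdle=0.08, max_missing_data_pct=2.0)
v2, change_record = amend(v1, "Tighter risk appetite after annual review",
                          "Finance policy owner", max_stress_drawdown=0.15)
```

Because the record is frozen, `v1` remains available for rollback after `v2` is created, and the change record carries the rationale that a review forum would expect to see.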

9. Practical Adoption Pattern for FP&A Organisations

9.1 Phase 1: Controlled Pilot

- Recommendation-only pilot: Operate in recommendation-only mode.

- Evidence-first validation: Validate the evidence pack, gate discipline and traceability before optimising speed.

- Ownership and roles: Assign the policy owner (finance), model steward, and operational runbook owner.

9.2 Phase 2: Structured Integration

- Forum integration: Use monthly or quarterly FP&A forums for review and approval.

- Standing governance agenda: Make policy thresholds and gate settings a standing agenda item (thresholds are the values; gate settings are how the thresholds are enforced).

- Overrides and escalation: Define override protocol and escalation paths.

9.3 Phase 3: Scaled Governed Learning

- Scale after stability: Increase run frequency only when controls are stable.

- Optional layers: Introduce gradually and only with measured incremental benefit.

- Upgrade discipline: Treat upgrades as governed change events.

10. Limitations and Responsible Use

- Uncertainty remains: The system structures uncertainty; it does not remove it.

- Learning depends on foundations: It requires data quality, stable policy, and artefact integrity.

- Operational reliability is first-order: Evidence is only as strong as reproducibility and notification delivery.

- Overfitting risk: Optimisation can overfit if thresholds drift without rationale.

- Experimental modules are off-by-default: Treat optional modules as R&D; enable only after explicit approval and measured benefit.

- Accountability stays human: Accountability cannot be delegated to model outputs.

11. Closing

Across the three-part series, the argument is consistent: Part 1 defined governance as the unlock, Part 2 demonstrated propose-test-approve collaboration in practice, and Part 3 provides the operating blueprint that makes governed collaboration repeatable. The governing principle remains propose, do not impose: AI proposes options and evidence; humans decide and remain accountable. In this model, performance is a result; governance is the product. The HHF shows this in practice.

11.1 What to Do on Monday Morning

A five-step FP&A start:
