In this article, the author examines how FP&A teams can implement trustworthy agentic AI by matching...

Artificial Intelligence (AI) adoption has stalled, yet the pressure to adopt keeps rising. According to L.E.K. Consulting's 2025 CFO Survey, 60% of CFOs believe AI will be among the most transformative technologies for their function, but only 11% are using it today and 35% are still stuck in pilots. [1]

This article identifies the key friction points, drawing on published research and real-world feedback from senior leaders across BFSI, FMCG, Digital Media, Energy, Industrials and Aviation, primarily at large multinationals. The lens is Finance, but the logic extends to the wider enterprise.

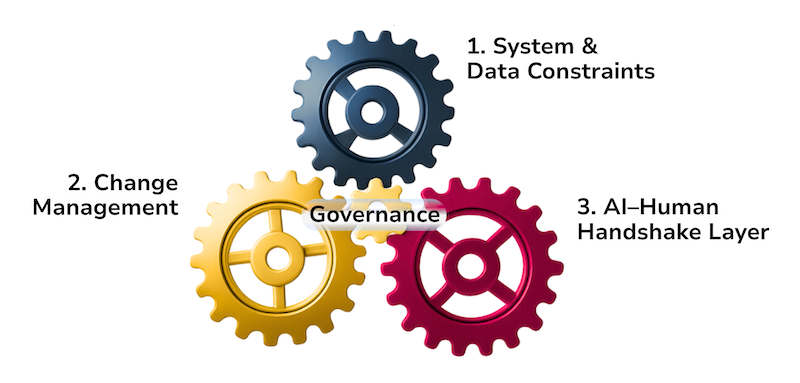

Three interlocking friction points are slowing adoption in Finance, and all of them rest on one non-negotiable precondition: Governance.

Figure 1. The AI Adoption Gap in Finance: Key Friction Points

Each friction point is examined below, covering what leaders are experiencing and how to navigate it. Before engaging with any of the three, however, establish Governance as the frame: it defines accountability, ensures ethical deployment, and maintains firm-wide visibility to contain risk. AI workflows should be risk-rated and documented like any other critical process, not treated as a separate experiment running outside the firm's existing control framework.

1. System & Data Constraints

Data is where it all starts: it is what you own, and the only ground AI can build on. Yet for most finance functions, this ground is far from ready.

This is clearly reflected in the 2025 FP&A Trends Survey [2]. When asked about the biggest issues in their planning and forecasting processes, respondents highlighted complex and inconsistent data (16%), outdated systems and disconnected workflows (15%), the lack of a single trusted data source (11%), and data that is often outdated and untimely (5%).

Taken together, these responses point to the same underlying problem: legacy, fragmented systems and workflows are the foundational bottleneck. Replacing core systems carries real risk, data quality and unification issues persist, and integration limitations stall pilots before they reach scale.

Addressing these constraints requires a pragmatic and phased approach:

Avoid binary thinking. The threshold for starting is not perfect data but a trusted financial source of truth.

Improve data integrity in parallel with AI deployment rather than indefinitely postponing adoption.

Replace core systems where strategically feasible. Where replacement is not viable, introduce an architectural layer over the existing systems, either built in-house or outsourced.

2. Change Management

Fear of job displacement is real and largely unaddressed. AI is perceived as a substitute for human judgment rather than a complement. Training and awareness gaps remain, and leadership has yet to provide clarity on what comes next and what the new world of Finance looks like.

The survey data bears this out: among CFOs, 35% cite a lack of awareness and 40% point to limited understanding of practical AI use cases as key barriers to adoption. [1]

To move from hesitation to adoption, finance teams need to focus on the following:

Leadership that is prepared to set direction, invest wisely, sponsor the effort and communicate a vision the team can trust.

Training mapped to role depth: different tools and capabilities for different functions, not a one-size-fits-all approach.

Own the narrative. AI is a tool, not a replacement. The judgment stays human, forever.

3. AI–Human Handshake Layer

Not fully understanding what AI does in your specific context is itself a risk. Security concerns around embedding AI remain largely unresolved, and there is no clear protocol for when humans must step in or override.

And AI hallucination at critical deadlines is an operational risk that is rarely stress-tested.

To get ahead of these risks, finance teams need to:

Define the handshake layer explicitly: where AI output stops and human responsibility begins, whether at the operational, oversight or approval level.

Build manual override in; do not bolt it on. Design it at every critical checkpoint, together with people who know the process well: not data scientists, but finance professionals with real IT aptitude who can trace back through the AI's logic when something fails.

Know your fallback before you need it. Pilots and passengers alike need an exit plan, not because they expect failure, but because diligence demands it.

Conclusion

The leaders I spoke to were at very different stages of their AI adoption journey: some evaluating, some starting, and a few midway. Yet, unsurprisingly, their pain points kept converging. That is not a coincidence.

It suggests that these three friction points are structural: sooner or later, they will find you. So make a measured start. You do not need to tackle all three simultaneously, but you do need a concrete plan for all three from day zero and the discipline to execute in phases.

Three steps to begin:

Start with alignment, not implementation. System, People, Governance and the AI Handshake Layer must point in the same direction.

Set a realistic timeline. Build it as a milestone roadmap with feedback loops.

Define your ROI upfront. Hours saved, redeployed capacity, sharper decisions, or simply keeping pace with a market that is not waiting.

Sources:

[1] L.E.K. Consulting, 2025 CFO Survey: https://www.lek.com/insights/hea/us/ei/lek-consultings-2025-office-cfo-survey-study-ai-ocfo

[2] FP&A Trends, 2025 FP&A Trends Survey: https://fpa-trends.com/fp-research/fpa-trends-survey-2025-ambition-execution-how-leading-fpa-teams-turn-insights-impact
